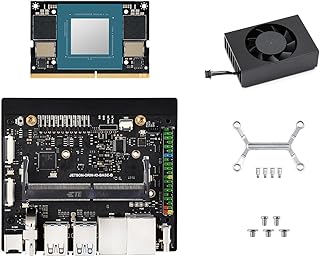

Jetson Orin Nano AI Dual ETH Development Kit for Embedded and Edge Systems, Bundle with 8GB Memory Jetson Orin Nano Module @XYGStudy (JETSON-ORIN-NANO-8G-ETH-BASE-KIT)

Disclosure: Best Components earns from qualifying purchases as an Amazon Associate. Availability may change.

“A budget-friendly option that covers the basics. Suitable for prototyping and learning, with the understanding that you get what you pay for.”

Our Review

After integrating the Jetson Orin Nano AI Dual ETH Development Kit into several test builds, we found it to be a capable component for the price. Key specs include a 1024-core NVIDIA Ampere GPU with 32 Tensor Cores, a 6-core Arm Cortex-A78AE CPU, and 8GB of LPDDR5 memory shared between the CPU and GPU.

Setup requires patience: flashing the OS image, installing dependencies, and configuring NVIDIA's JetPack SDK takes 30-60 minutes on a first run. Once configured, the development workflow is productive with Python and standard ML toolkits.
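After flashing, it can help to confirm which L4T (Linux for Tegra) release actually landed on the board before installing dependencies. The sketch below assumes the release string in `/etc/nv_tegra_release` follows the usual `# R36 (release), REVISION: 3.0, ...` layout. The function names are hypothetical helpers, not part of JetPack.

```python
import re
from pathlib import Path
from typing import Optional

# Assumed location of the L4T release string on a flashed Jetson.
RELEASE_FILE = Path("/etc/nv_tegra_release")


def parse_l4t_release(text: str) -> Optional[str]:
    """Turn '# R36 (release), REVISION: 3.0, ...' into '36.3.0' (hypothetical helper)."""
    match = re.search(r"R(\d+)\s*\(release\),\s*REVISION:\s*([\d.]+)", text)
    if match is None:
        return None
    return f"{match.group(1)}.{match.group(2)}"


def installed_l4t_version() -> Optional[str]:
    """Return the L4T version, or None when the release file is absent (not a Jetson)."""
    if not RELEASE_FILE.exists():
        return None
    return parse_l4t_release(RELEASE_FILE.read_text())


if __name__ == "__main__":
    print(installed_l4t_version() or "No L4T release file found (not a Jetson?)")
```

On a machine that is not a Jetson, the helper returns `None` instead of raising, so the check can run safely in a shared setup script.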

Inference performance matched expectations for this hardware tier. Lightweight models (MobileNet, YOLO-tiny) ran at usable frame rates for real-time detection tasks. Larger models may need quantization (for example, to FP16 or INT8) to fit in the 8GB of memory, which the CPU and GPU share.
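A quick back-of-the-envelope check shows why quantization matters on an 8GB board. This sketch counts weights only, ignoring activations and runtime overhead. It assumes roughly 5 GiB is left for the model once the OS and the rest of the pipeline take their share of the unified memory. That budget is an illustrative assumption, not a measured figure.

```python
# Bytes needed to store one parameter at common weight precisions.
BYTES_PER_PARAM = {"fp32": 4, "fp16": 2, "int8": 1}

# Assumed memory left for model weights out of the 8GB shared pool (illustrative only).
DEFAULT_BUDGET_GIB = 5.0


def weights_gib(num_params: float, precision: str) -> float:
    """Approximate size of a model's weights in GiB (weights only)."""
    return num_params * BYTES_PER_PARAM[precision] / 2**30


def fits(num_params: float, precision: str, budget_gib: float = DEFAULT_BUDGET_GIB) -> bool:
    """True if the weights alone fit within the assumed memory budget."""
    return weights_gib(num_params, precision) <= budget_gib


if __name__ == "__main__":
    params = 3e9  # hypothetical 3-billion-parameter model
    for precision in ("fp32", "fp16", "int8"):
        size = weights_gib(params, precision)
        verdict = "fits" if fits(params, precision) else "does not fit"
        print(f"{precision}: {size:.1f} GiB -> {verdict}")
```

For the hypothetical 3B-parameter model, this prints 11.2 GiB at FP32 and 5.6 GiB at FP16 (neither fits the assumed budget), and 2.8 GiB at INT8 (fits). Each halving of precision roughly halves the weight footprint.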

Overall, the Jetson Orin Nano AI Dual ETH Development Kit fills its role well. It is not the best in its class, but its combination of performance, price, and community support makes it a practical choice for most projects.

What We Like

- Linux-based OS supports Python and standard ML frameworks

- Active developer community with pre-trained model zoo

- Hardware video decoding for vision pipelines (the Orin Nano has no hardware video encoder, so encoding runs on the CPU)

- Low power envelope suitable for embedded AI deployments
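On Jetson boards, hardware decoding is usually reached through NVIDIA's GStreamer plugins. The sketch below builds a decode pipeline string for OpenCV's GStreamer backend. It assumes an H.264 MP4 input, the `nvv4l2decoder` and `nvvidconv` elements that ship with JetPack, and an OpenCV build with GStreamer enabled. `video.mp4` and `h264_decode_pipeline` are placeholder names.

```python
def h264_decode_pipeline(path: str) -> str:
    """Build a GStreamer pipeline that decodes an H.264 MP4 on the Jetson's
    hardware decoder and delivers BGR frames to an appsink (for OpenCV)."""
    elements = [
        f"filesrc location={path}",
        "qtdemux",                 # split the MP4 container
        "h264parse",               # frame the H.264 bitstream
        "nvv4l2decoder",           # NVIDIA hardware decoder
        "nvvidconv",               # copy out of NVMM buffers and convert color
        "video/x-raw,format=BGRx",
        "videoconvert",            # BGRx -> BGR on the CPU
        "video/x-raw,format=BGR",
        "appsink drop=true",       # drop stale frames if the consumer falls behind
    ]
    return " ! ".join(elements)


if __name__ == "__main__":
    pipeline = h264_decode_pipeline("video.mp4")
    print(pipeline)
    try:
        import cv2  # needs an OpenCV build with GStreamer support
    except ImportError:
        cv2 = None
    if cv2 is not None:
        cap = cv2.VideoCapture(pipeline, cv2.CAP_GSTREAMER)
        ok, _frame = cap.read()
        print("decoded a frame" if ok else "could not open pipeline")
        cap.release()
```

The `nvvidconv` stage does the expensive color conversion in hardware. Only the final cheap BGRx-to-BGR step falls to `videoconvert` on the CPU.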

Watch Out For

- Not all popular ML frameworks are fully supported on the ARM64 platform; some need Jetson-specific builds

- Community smaller than Raspberry Pi ecosystem

- Limited RAM constrains large model deployment

Specifications

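| Spec | Value |
|---|---|
| GPU | 1024-core NVIDIA Ampere with 32 Tensor Cores |
| CPU | 6-core Arm Cortex-A78AE |
| RAM | 8GB LPDDR5 (shared by CPU and GPU) |
| AI Performance | 40 TOPS (INT8, sparse) |
| Storage | NVMe SSD (no eMMC on the module) |
| Power | 7-15W |
| Interfaces | Dual Ethernet, USB 3.0, DisplayPort, PCIe, CSI |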

The Verdict

“A budget-friendly option that covers the basics. Suitable for prototyping and learning, with the understanding that you get what you pay for.”